Siddhi 4.x Quick Start Guide

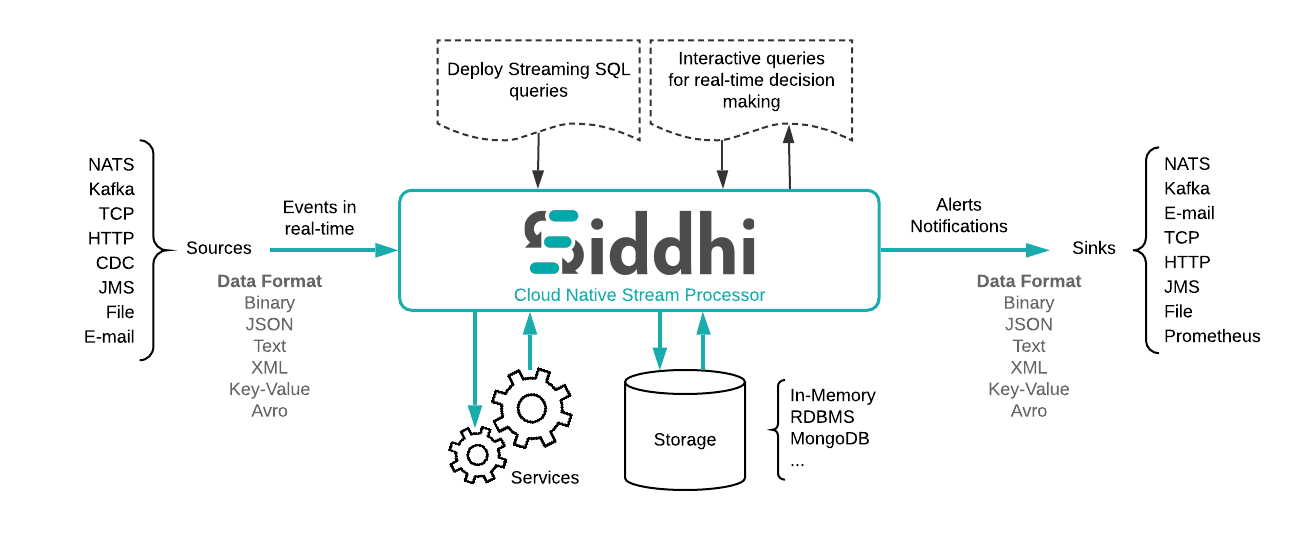

Siddhi is a cloud native Streaming and Complex Event Processing engine that understands Streaming SQL queries in order to capture events from diverse data sources, process them, detect complex conditions, and publish output to various endpoints in real time.

Siddhi is used by many companies, including Uber, eBay, and PayPal (via Apache Eagle). Uber processed more than 20 billion events per day using Siddhi for its fraud analytics use cases. Siddhi is also used in various analytics and integration platforms, such as Apache Eagle as a policy enforcement engine, WSO2 API Manager as an analytics and throttling engine, and WSO2 Identity Server as an adaptive authorization engine.

This quick start guide contains the following six sections:

- Domain of Siddhi

- Overview of Siddhi architecture

- Using Siddhi for the first time

- Siddhi ‘Hello World!’

- Simulating Events

- A bit of Stream Processing

1. Domain of Siddhi

Siddhi is an event-driven system in which all the data it consumes, processes, and sends is modeled as events. Therefore, Siddhi can play a vital part in any event-driven architecture.

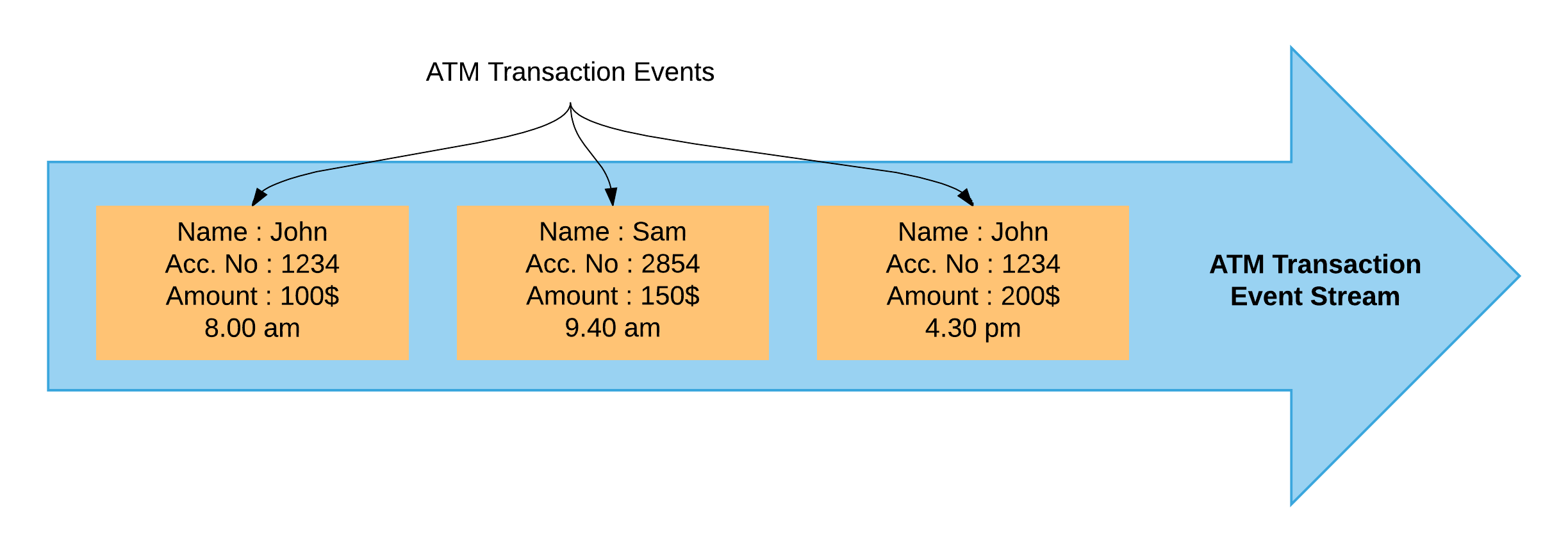

As Siddhi works with events, first let's understand what an event is through an example. If we consider transactions carried out via an ATM as a data stream, one withdrawal from it can be considered as an event. This event contains data such as amount, time, account number, etc. Many such transactions form a stream.
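To make the idea concrete, here is a minimal Python sketch (not Siddhi code) that models one ATM withdrawal as an event and a sequence of withdrawals as a stream. The class name `WithdrawalEvent`, its field names, and the sample values are illustrative assumptions, not part of Siddhi.

```python
from dataclasses import dataclass
from typing import Iterator, List


@dataclass(frozen=True)
class WithdrawalEvent:
    """One ATM withdrawal, modeled as a single event (hypothetical schema)."""
    amount: int          # amount withdrawn
    timestamp: int       # time of the transaction, epoch milliseconds
    account_number: str  # account the money was withdrawn from


def atm_stream(events: List[WithdrawalEvent]) -> Iterator[WithdrawalEvent]:
    """Yield events one at a time, the way a stream delivers them."""
    for event in events:
        yield event


# Made-up sample data for illustration only.
withdrawals = [
    WithdrawalEvent(amount=100, timestamp=1_000, account_number="ACC-1"),
    WithdrawalEvent(amount=250, timestamp=2_000, account_number="ACC-2"),
]
amounts = [event.amount for event in atm_stream(withdrawals)]
print(amounts)
```

Each object is one event, and iterating over `atm_stream` plays the role of the data stream.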

Siddhi provides the following functionalities:

- Streaming Data Analytics

  Forrester defines Streaming Analytics as: "Software that provides analytical operators to orchestrate data flow, calculate analytics, and detect patterns on event data from multiple, disparate live data sources to allow developers to build applications that sense, think, and act in real time."

- Complex Event Processing (CEP)

  Gartner’s IT Glossary defines CEP as follows: "CEP is a kind of computing in which incoming data about events is distilled into more useful, higher level “complex” event data that provides insight into what is happening."

  "CEP is event-driven because the computation is triggered by the receipt of event data. CEP is used for highly demanding, continuous-intelligence applications that enhance situation awareness and support real-time decisions."

- Streaming Data Integration

  Streaming data integration is a way of integrating several systems by processing, correlating, and analyzing the data in memory, while continuously moving data in real time from one system to another.

- Alerts & Notifications

  A way to continuously monitor event streams and send alerts and notifications based on defined KPIs and other analytics.

- Adaptive Decision Making

  A way to dynamically make real-time decisions based on predefined rules, the current state of the connected systems, and machine learning techniques.

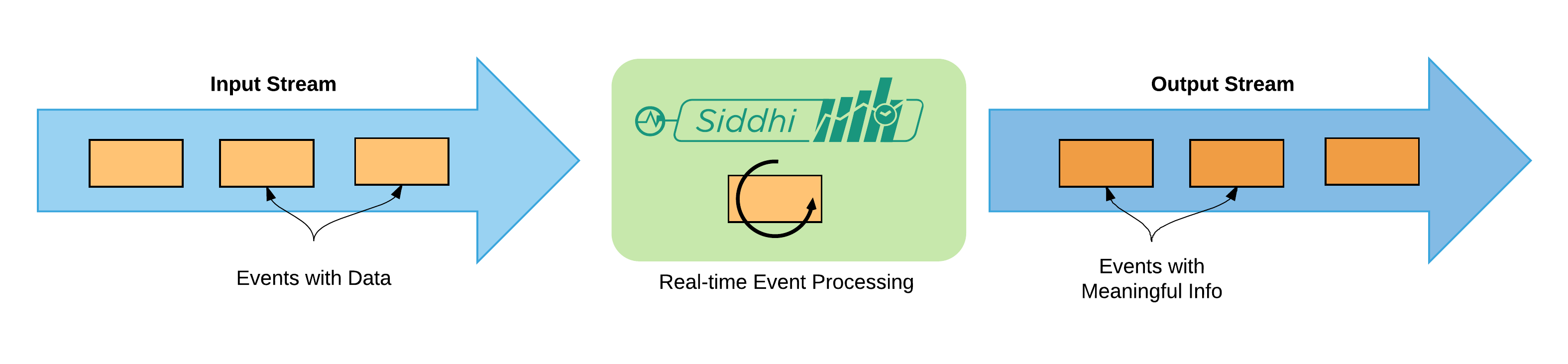

Basically, Siddhi receives data event-by-event and processes them in real-time to produce meaningful information.

Using the above capabilities, Siddhi can be used to solve many use cases, such as:

- Fraud Analytics

- Monitoring

- System Integration

- Anomaly Detection

- Sentiment Analysis

- Processing Customer Behavior

- etc.

2. Overview of Siddhi architecture

As indicated above, Siddhi can:

- Accept event inputs from many different types of sources.

- Process them to transform, enrich, and generate insights.

- Publish them to multiple types of sinks.

To use Siddhi, you need to write the processing logic as a Siddhi Application in the Siddhi Streaming SQL language, which is discussed in section 4. A Siddhi Application is a script file that contains the business logic for a scenario.

When the Siddhi application is started, it:

- Consumes data one-by-one as events.

- Pipes the events to queries through various streams for processing.

- Generates new events based on the processing done at the queries.

- Finally, sends the newly generated events as output through streams.
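The four steps above can be sketched in plain Python. This is only a simplified illustration of the flow, not how Siddhi is implemented: the function `run_app`, the `double_weight` query, and the dictionary-based events are all hypothetical names chosen for this example.

```python
from typing import Callable, Dict, Iterable, Iterator, List

Event = Dict[str, int]


def run_app(source: Iterable[Event],
            query: Callable[[Event], Iterator[Event]],
            sink: Callable[[Event], None]) -> None:
    """Consume events one by one, pass each through the query, publish results."""
    for event in source:          # consume data one-by-one as events
        for out in query(event):  # the query processes it and generates new events
            sink(out)             # send each newly generated event to the output


def double_weight(event: Event) -> Iterator[Event]:
    """Hypothetical query: emit one new event with the weight doubled."""
    yield {"weight": event["weight"] * 2}


published: List[Event] = []
run_app([{"weight": 1}, {"weight": 5}], double_weight, published.append)
print(published)
```

Here the list `published` stands in for an output stream; in a real deployment a sink would publish the events to an external system.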

3. Using Siddhi for the first time

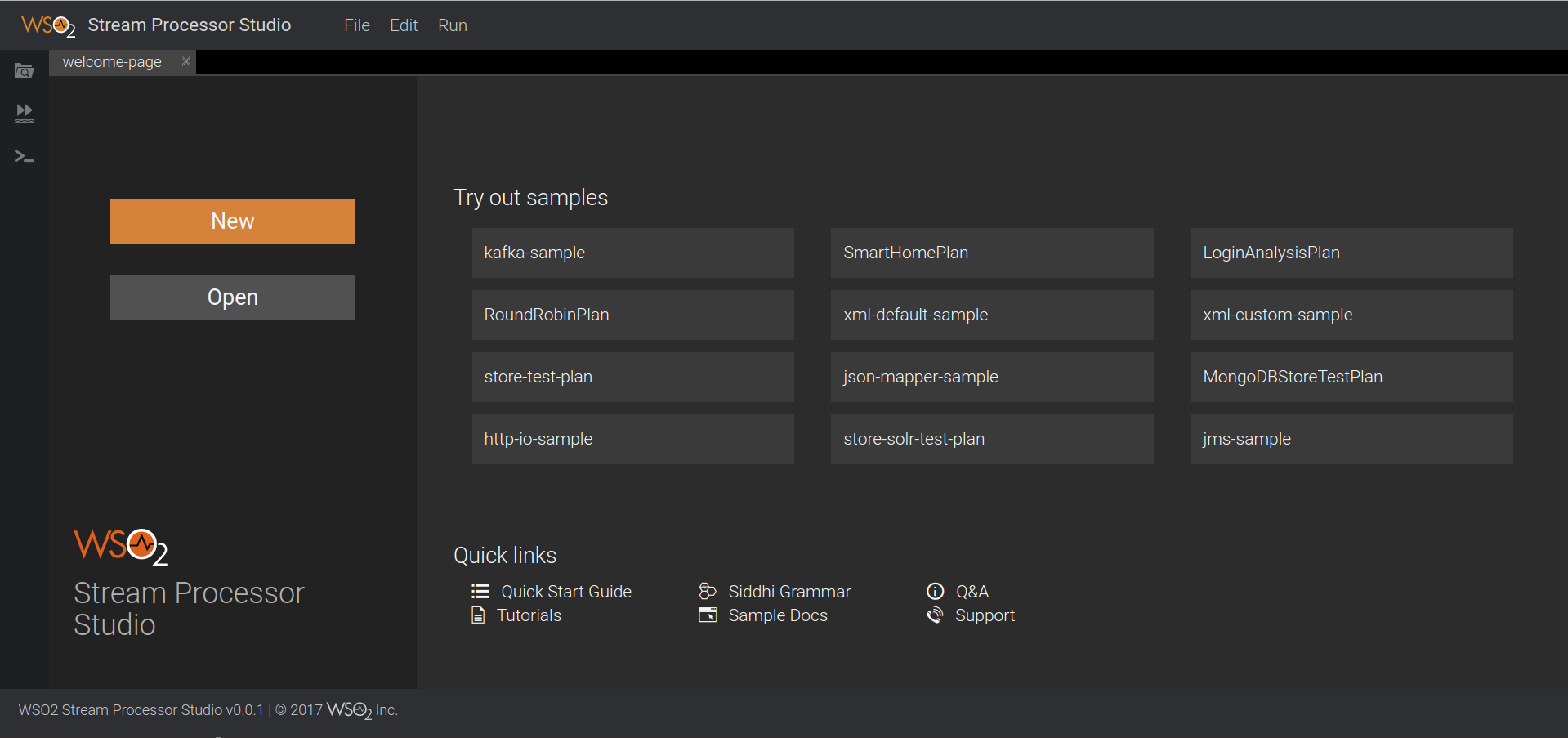

In this section, we will be using the WSO2 Stream Processor (referred to as SP in the rest of this guide), a server version of Siddhi that has a sophisticated editor with a GUI (referred to as “Stream Processor Studio”) where you can write your query and simulate events as a data stream.

Step 1 — Install

Oracle Java SE Development Kit (JDK) version 1.8.

Step 2 — Set the JAVA_HOME environment

variable.

Step 3 — Download the latest WSO2 Stream Processor.

Step 4 — Extract the downloaded zip and navigate to <SP_HOME>/bin.

(SP_HOME refers to the extracted folder)

Step 5 — Issue the following command in the command prompt (Windows) / terminal (Linux)

For Windows: editor.bat

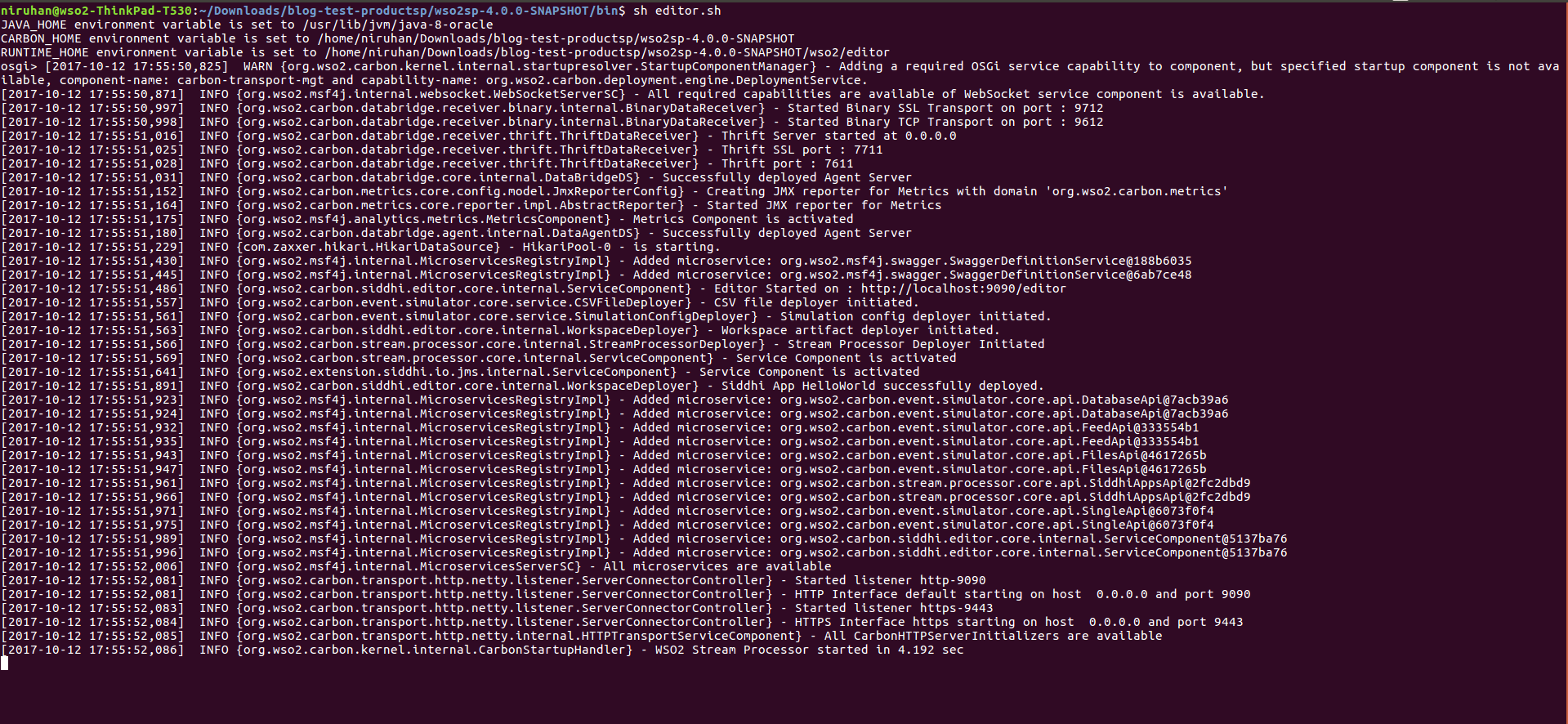

For Linux: ./editor.shAfter successfully starting the Stream Processor Studio, the terminal in Linux should look like as shown below:

After starting the WSO2 Stream Processor, access the Stream Processor Studio by visiting the following link in your browser.

http://localhost:9390/editor
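If the editor script fails to start, a common cause is a missing or incorrect JAVA_HOME setting. The following Python sketch is one possible way to sanity-check it; it is not part of WSO2 Stream Processor, and the function name `java_home_status` and its three return values are assumptions made for this example.

```python
import os
from pathlib import Path
from typing import Mapping


def java_home_status(env: Mapping[str, str]) -> str:
    """Return 'missing', 'invalid', or 'ok' for the JAVA_HOME setting in `env`."""
    java_home = env.get("JAVA_HOME")
    if not java_home:
        return "missing"
    bin_dir = Path(java_home) / "bin"
    # A JDK ships a `java` launcher (`java.exe` on Windows) under bin/.
    if (bin_dir / "java").is_file() or (bin_dir / "java.exe").is_file():
        return "ok"
    return "invalid"


print("JAVA_HOME status:", java_home_status(os.environ))
```

The check only confirms that a Java launcher exists under JAVA_HOME; it does not verify the JDK version.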

4. Siddhi ‘Hello World!’

Siddhi Streaming SQL is a rich, compact, easy-to-learn SQL-like language. Let's first learn how to find the total of the values coming into a data stream and output the current running total with each event. Siddhi has a lot of built-in functions and extensions available for complex analysis, but to get started, let's use a simple one. You can find more information about the Siddhi grammar and its functions in the Siddhi Query Guide.

Let's consider a scenario where we are loading cargo boxes into a ship. We need to keep track of the total weight of the cargo added. Measuring the weight of a cargo box when loading is considered an event.

We can write a Siddhi program for the above scenario which has 4 parts.

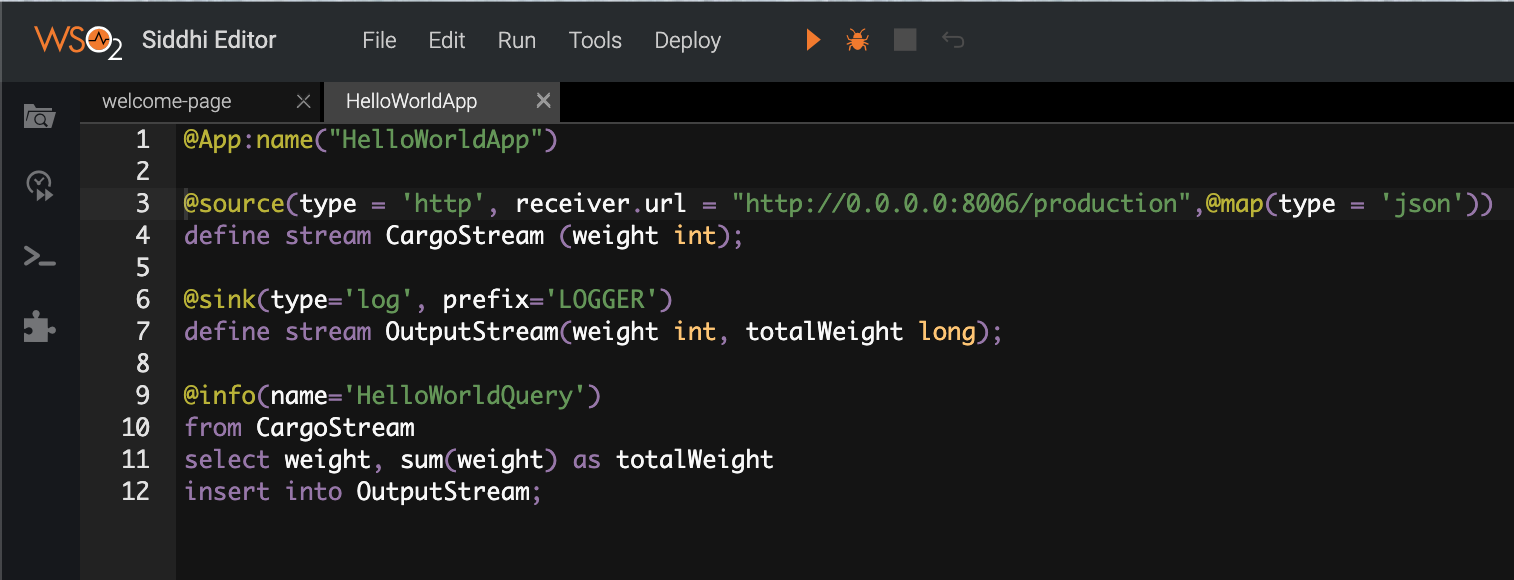

Part 1: Giving our Siddhi application a suitable name. This is a Siddhi routine. In this example, let's name our application “HelloWorldApp”.

@App:name("HelloWorldApp")

Part 2: Defining the input stream.

- The name of the input stream: “CargoStream”

  This contains only one data attribute:

  - The name of the data in each event: “weight”

  - Type of the data “weight”: int

define stream CargoStream (weight int);

Part 3: Defining the output stream. Here, we add a "sink" to log the OutputStream so that we can observe the output values. (A sink is the Siddhi way to publish streams to external systems. This particular log type sink just logs the stream events. To learn more about sinks, see sink.)

@sink(type='log', prefix='LOGGER')

define stream OutputStream(weight int, totalWeight long);

Part 4: The actual Siddhi query. Here we need to specify the following:

- A name for the query: “HelloWorldQuery”

- Which stream should be taken into processing: “CargoStream”

- What data we require in the output stream: “weight”, “totalWeight”

- How the output should be calculated: by calculating the sum of the weights

- Which stream should be populated with the output: “OutputStream”

@info(name='HelloWorldQuery')

from CargoStream

select weight, sum(weight) as totalWeight

insert into OutputStream;
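To see what this query computes, the following Python sketch simulates its behavior outside Siddhi. It is an illustration under the assumption that `sum(weight)` without a window acts as a running total over all events seen so far; the function name `hello_world_query` is hypothetical.

```python
from typing import Iterable, Iterator, Tuple


def hello_world_query(weights: Iterable[int]) -> Iterator[Tuple[int, int]]:
    """Emit (weight, totalWeight) per incoming event, keeping only the running sum."""
    total_weight = 0
    for weight in weights:
        total_weight += weight     # only the total is kept, no events are stored
        yield weight, total_weight


outputs = list(hello_world_query([2, 2, 5]))
print(outputs)
```

Sending the weight 2 twice yields the pairs (2, 2) and then (2, 4), and a further weight of 5 yields (5, 9).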

5. Simulating Events

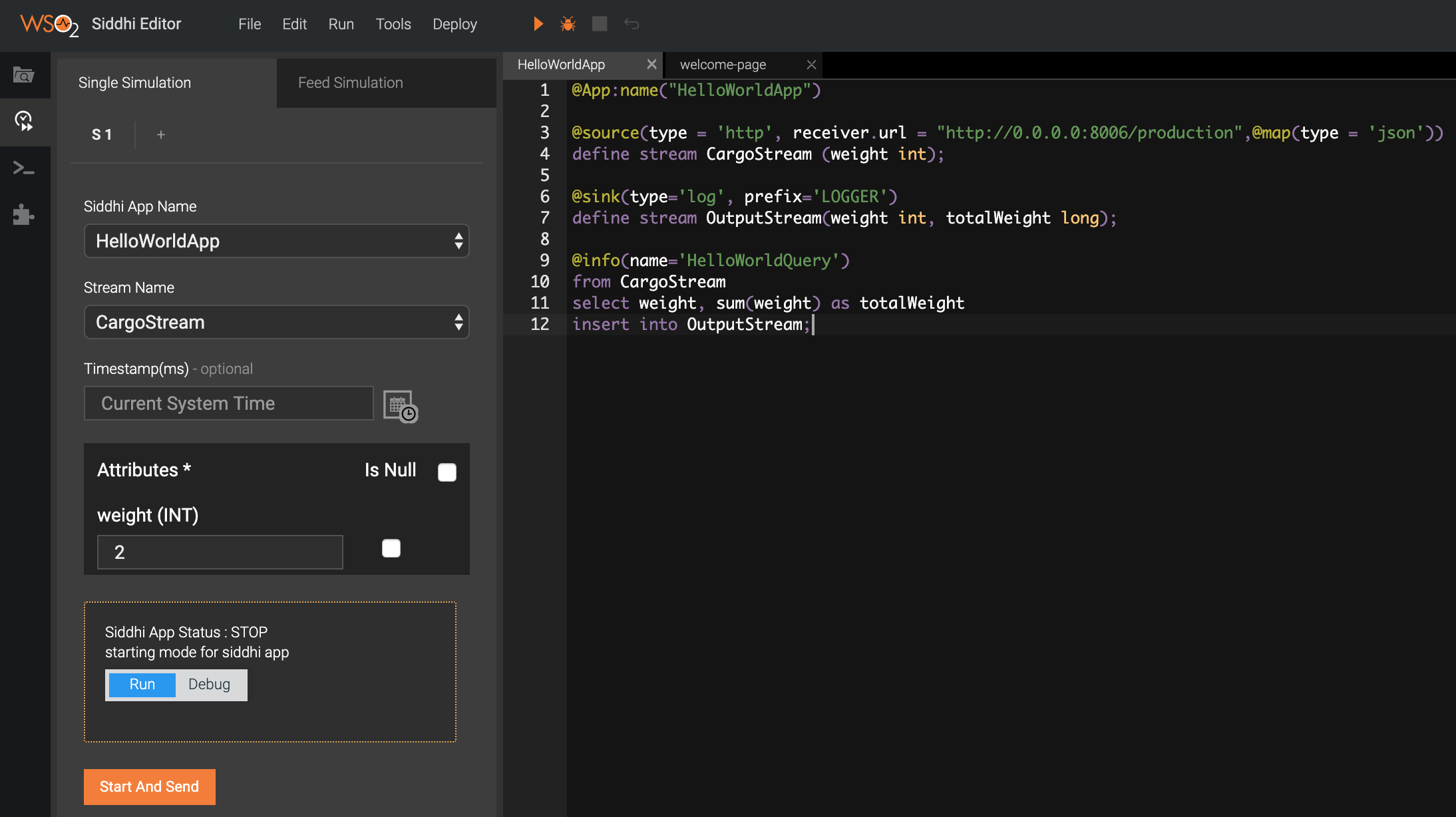

The Stream Processor Studio has in-built support to simulate events. You can do it via the “Event Simulator” panel at the left of the Stream Processor Studio. You should save your HelloWorldApp by browsing to File -> Save before you run event simulation. Then click Event Simulator and configure it as shown below.

Step 1 — Configurations:

- Siddhi App Name — “HelloWorldApp”

- Stream Name — “CargoStream”

- Timestamp — (Leave it blank)

- weight — 2 (or some integer)

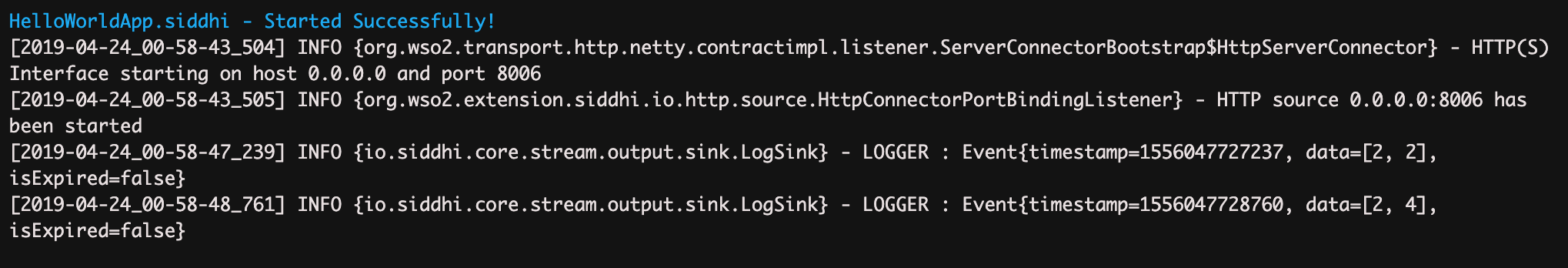

Step 2 — Click “Run” mode and then click “Start”. This starts the Siddhi Application. If the Siddhi application is successfully started, the following message is printed in the Stream Processor Studio console: “HelloWorldApp.siddhi Started Successfully!”

Step 3 — Click “Send” and observe the terminal where you started WSO2 Stream Processor Studio. You can see a log that contains “outputData=[2, 2]”. Click Send again and observe a log with “outputData=[2, 4]”. You can change the value of the weight and send it to see how the sum of the weight is updated.

Bravo! You have successfully completed creating Siddhi Hello World!

6. A bit of Stream Processing

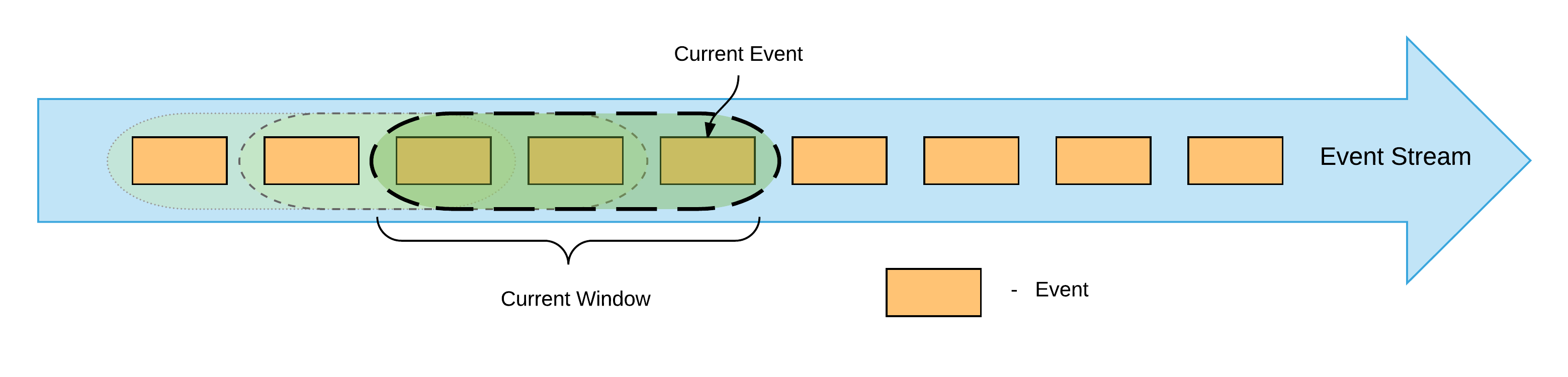

This section demonstrates how to carry out temporal window processing with Siddhi.

Up to this point, we have been carrying out the processing by having only the running sum value in-memory. No events were stored during this process.

Window processing is a method that allows us to store some events in memory for a given period so that we can perform operations such as calculating the average, maximum, etc. of the values within them.

Let's imagine that when we are loading cargo boxes into the ship, we need to keep track of the average weight of the recently loaded boxes so that we can balance the weight across the ship. For this purpose, let's find the average weight of the last three boxes each time a new event arrives.

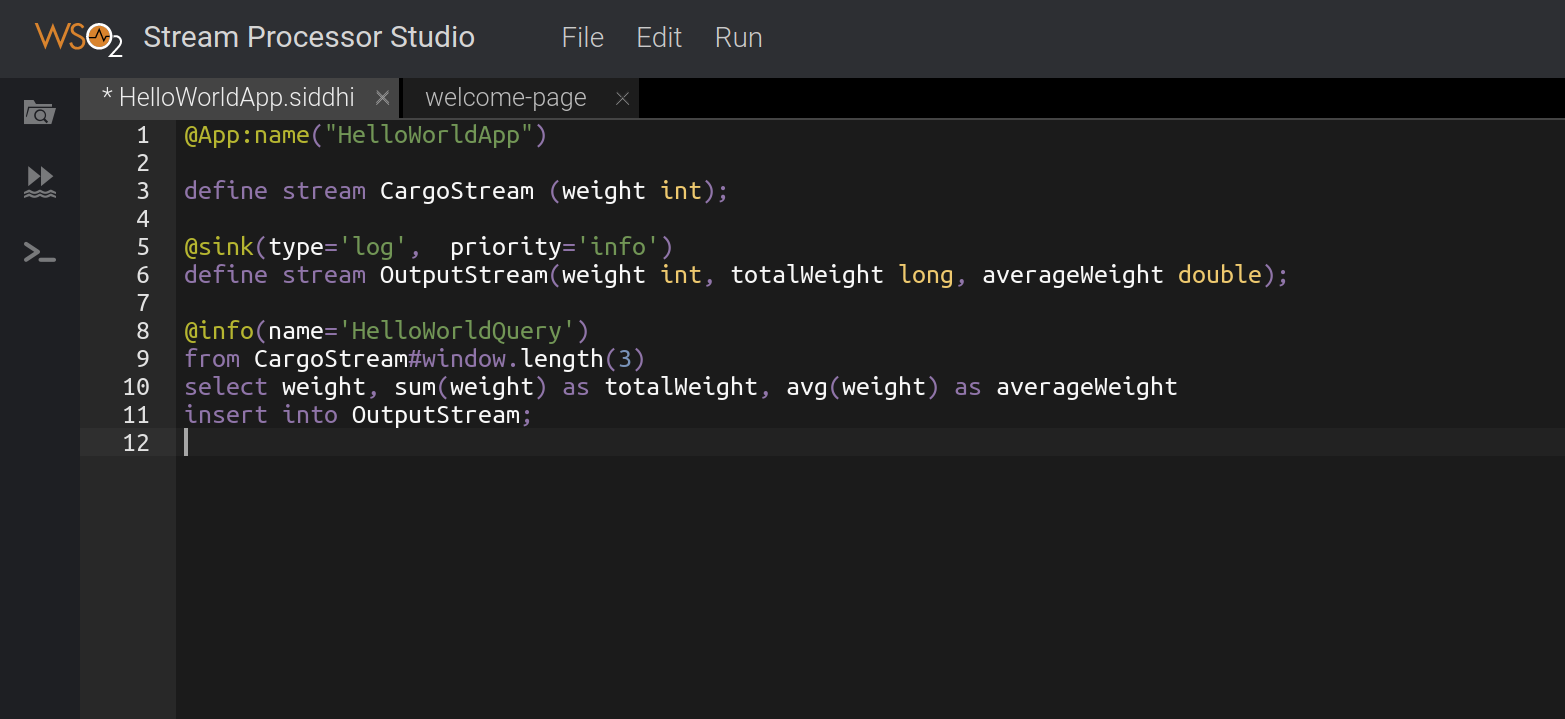

For window processing, we need to modify our query as follows:

@info(name='HelloWorldQuery')

from CargoStream#window.length(3)

select weight, sum(weight) as totalWeight, avg(weight) as averageWeight

insert into OutputStream;

- from CargoStream#window.length(3) - Here, we are specifying that the last 3 events should be kept in memory for processing.

- avg(weight) as averageWeight - Here, we are calculating the average of the events stored in the window and producing the average value as "averageWeight". (Note: Now the sum also calculates the totalWeight based on the last three events.)

We also need to modify the "OutputStream" definition to accommodate the new "averageWeight".

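define stream OutputStream(weight int, totalWeight long, averageWeight double);

The updated Siddhi Application should look as shown below:

@App:name("HelloWorldApp")

define stream CargoStream (weight int);

@sink(type='log', prefix='LOGGER')

define stream OutputStream(weight int, totalWeight long, averageWeight double);

@info(name='HelloWorldQuery')

from CargoStream#window.length(3)

select weight, sum(weight) as totalWeight, avg(weight) as averageWeight

insert into OutputStream;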

Now you can send events using the Event Simulator and observe the log to see the sum and average of the weights of the last three cargo events.

It is also notable that the defined length window only keeps 3 events in memory. When the 4th event arrives, the first event in the window is removed from memory. This ensures that the memory usage does not grow beyond a specific limit. Siddhi also includes other optimizations to reduce memory consumption. For more information, see Siddhi Architecture.
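The behavior of the length window can be simulated with a short Python sketch. This is not Siddhi code and says nothing about Siddhi's internals; it assumes a sliding window in which the oldest event is dropped once more than three have arrived, and the function name `length_window_query` is hypothetical.

```python
from collections import deque
from typing import Deque, Iterable, Iterator, Tuple


def length_window_query(weights: Iterable[int],
                        length: int = 3) -> Iterator[Tuple[int, int, float]]:
    """Emit (weight, totalWeight, averageWeight) over the last `length` events."""
    window: Deque[int] = deque(maxlen=length)  # oldest event is dropped when full
    for weight in weights:
        window.append(weight)
        total = sum(window)
        yield weight, total, total / len(window)


results = list(length_window_query([2, 4, 6, 8]))
print(results)
```

When the fourth weight (8) arrives, the first weight (2) leaves the window, so the total and the average are computed over 4, 6, and 8 only. Memory use stays bounded because the window never holds more than three values.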

To learn more about the Siddhi functionality, see Siddhi Documentation.

If you have questions, please post them on Stack Overflow with the "Siddhi" tag.
